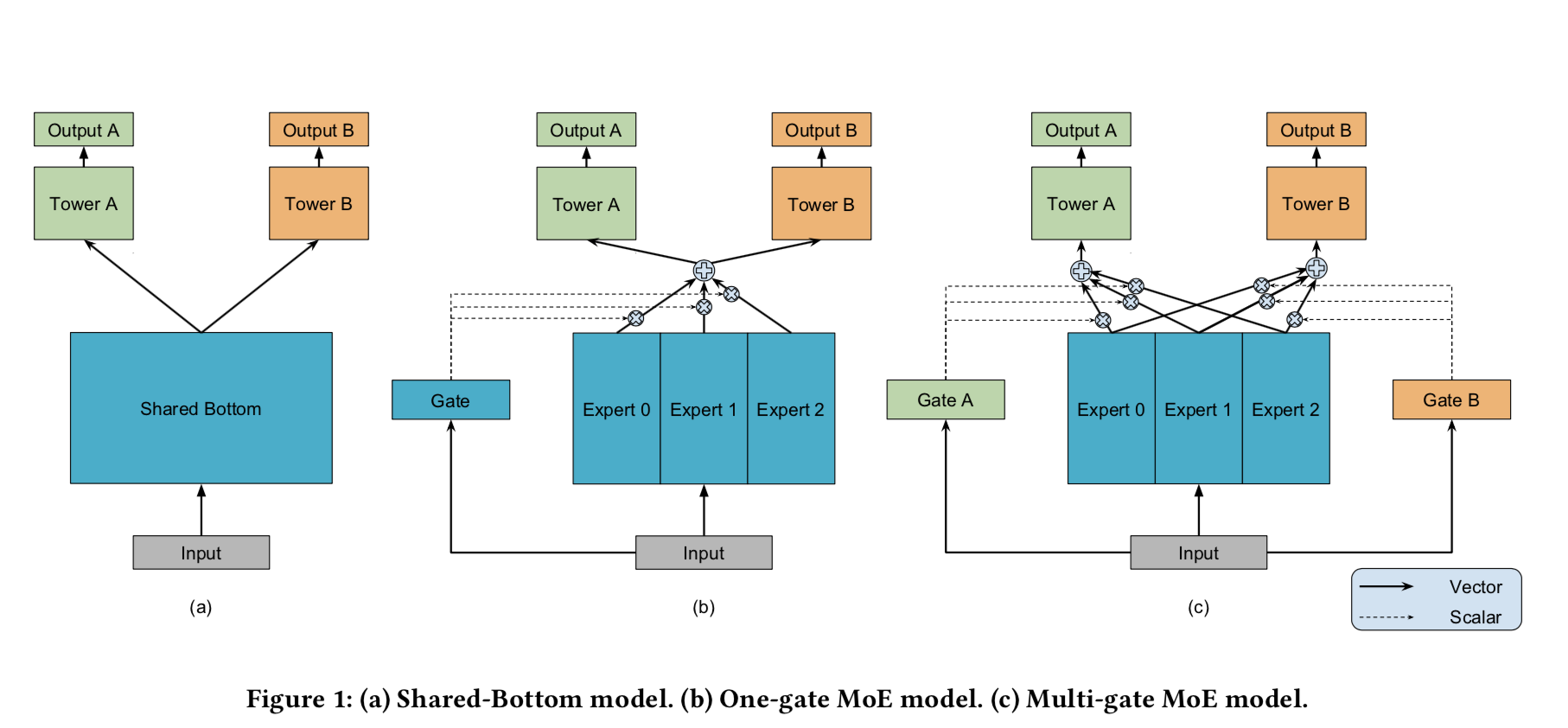

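This is the core figure of the paper's method. An "expert" here is one network in a new set of neural networks. The outputs of these networks are combined through a gate to produce the final output. The gate plays a role similar to the attention/importance weights in an attention model.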
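The gated combination described above can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the paper's implementation: it assumes each expert is a single ReLU layer, each gate is a linear layer followed by a softmax, and there is one gate per task (as the "multi-gate" name suggests). The names `mmoe_forward`, `expert_weights`, and `gate_weights` and all shapes are hypothetical, and the task-specific towers that would sit on top are left out.

```python
import numpy as np


def softmax(z, axis=-1):
    # Subtract the max for numerical stability before exponentiating.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def mmoe_forward(x, expert_weights, gate_weights):
    """Hypothetical gated mixture of experts.

    x:              (batch, d_in)
    expert_weights: (n_experts, d_in, d_hidden), one ReLU layer per expert (assumption)
    gate_weights:   (n_tasks, d_in, n_experts), one linear gate per task (assumption)
    """
    # Every expert sees the same input: (batch, n_experts, d_hidden).
    expert_out = np.maximum(0.0, np.einsum("bi,eih->beh", x, expert_weights))
    # Each task's gate yields a weight per expert: (batch, n_tasks, n_experts).
    gates = softmax(np.einsum("bi,tie->bte", x, gate_weights), axis=-1)
    # Weighted sum of expert outputs for each task: (batch, n_tasks, d_hidden).
    mixed = np.einsum("bte,beh->bth", gates, expert_out)
    return mixed, gates


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))                  # 4 examples, 8 features
expert_weights = rng.normal(size=(3, 8, 5))  # 3 experts, hidden size 5
gate_weights = rng.normal(size=(2, 8, 3))    # 2 tasks, 3 experts each
mixed, gates = mmoe_forward(x, expert_weights, gate_weights)
```

Because each gate's weights sum to 1 over the experts, every task receives a convex combination of the expert outputs, which is what makes the gate resemble attention weights.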

| Field | Value |
| --- | --- |
| Title | Modeling Task Relationships in Multi-task Learning with Multi-gate Mixture-of-Experts |
| Author | Jiaqi Ma |
| Year | 2018 |
| Keywords | KDD 2018; multi-task learning; mixture of experts; neural network; recommendation system; Shared-Bottom model |
| Abstract | Neural-based multi-task learning has been successfully used in many real-world large-scale applications such as recommendation systems. For example, in movie recommendations, beyond providing users movies which they tend to purchase and watch, the system might also optimize for users liking the movies afterwards. With multi-task learning, we aim to build a single model that learns these multiple goals and tasks simultaneously. However, the prediction quality of commonly used multi-task models is often sensitive to the relationships between tasks. It is therefore important to study the modeling tradeoffs between task-specific objectives and inter-task relationships. In this work, we propose a novel multi-task learning approach, Multi-gate Mixture-of-Experts (MMoE), which explicitly learns to model task relationships from data. We adapt the Mixture-of-Experts (MoE) structure to multi-task learning by sharing the expert submodels across all tasks, while also having a gating network trained to optimize each task. To validate our approach on data with different levels of task relatedness, we first apply it to a synthetic dataset where we control the task relatedness. We show that the proposed approach performs better than baseline methods when the tasks are less related. We also show that the MMoE structure results in an additional trainability benefit, depending on different levels of randomness in the training data and model initialization. Furthermore, we demonstrate the performance improvements by MMoE on real tasks including a binary classification benchmark, and a large-scale content recommendation system at Google. |